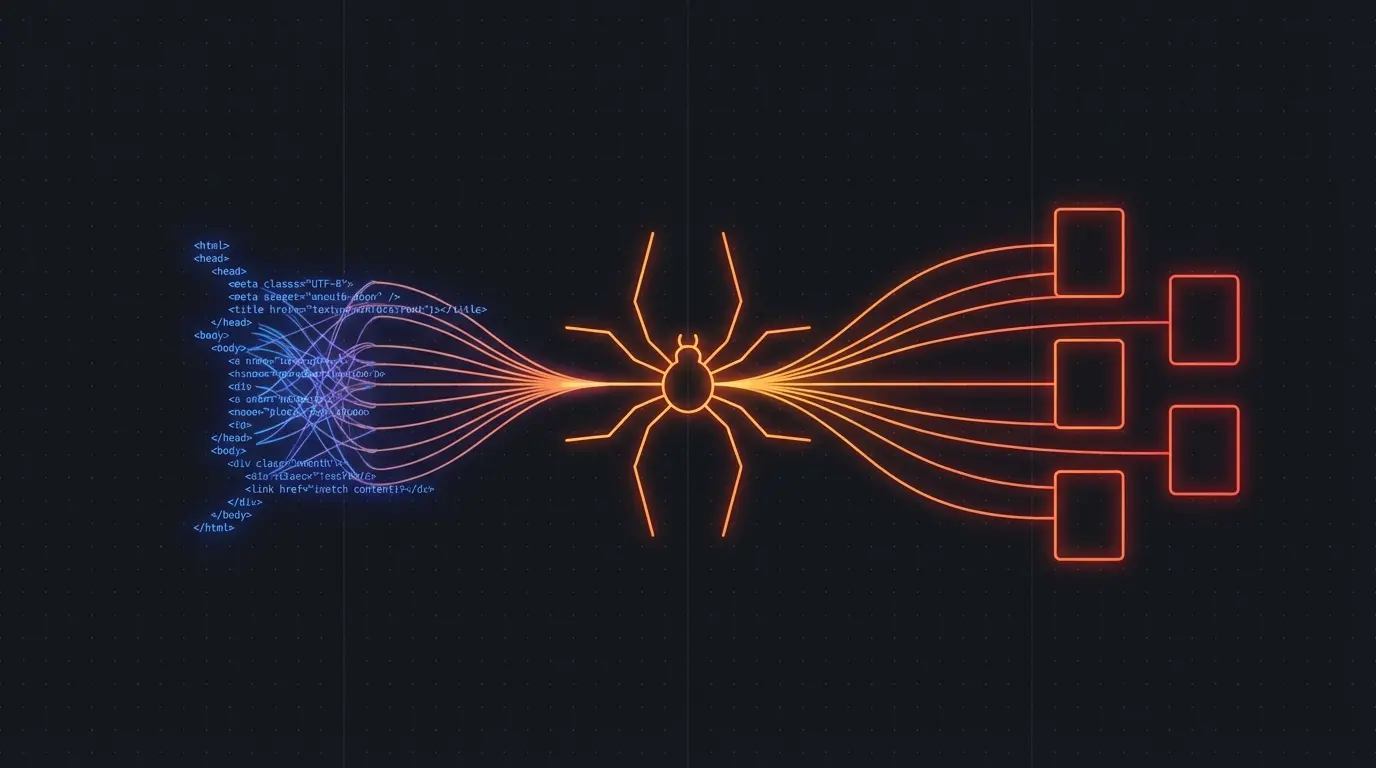

If you're building an AI application that needs web data — a RAG pipeline, an AI agent, a competitive intelligence tool — you have three main options for getting that data:

- DIY with Puppeteer/Playwright — full control, full headaches

- Beautiful Soup / Cheerio — fast for static HTML, useless for JS-heavy sites

- Managed API like Firecrawl — API call in, clean data out

Here's when to use each, from someone who's built commercial scraping infrastructure.

Beautiful Soup / Cheerio

Best for: Static HTML sites with simple structure.

These libraries parse HTML and let you select elements with CSS selectors or XPath. They're fast, lightweight, and work great — as long as the content exists in the HTML source.

The catch: Many modern websites render their content with JavaScript. In SPAs, client-rendered React apps, and pages that load content dynamically, that content never appears in the raw HTML. Beautiful Soup sees an empty `<div id="root"></div>` and that's it.
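A quick way to check whether a page has this problem is to look at how much visible text its raw HTML holds. The sketch below is a rough regex heuristic, not how Beautiful Soup or Cheerio work internally: it strips `<script>` and `<style>` blocks and all tags, then checks what text is left. The `looksJsRendered` name and the 200-character threshold are arbitrary assumptions.

```javascript
// Rough heuristic: does raw HTML contain visible text, or is it an empty
// shell that only fills in after JavaScript runs? Regex-based, so treat it
// as a triage sketch, not a substitute for a real HTML parser.
function visibleText(html) {
  return html
    .replace(/<script[\s\S]*?<\/script>/gi, ' ') // drop inline and external script blocks
    .replace(/<style[\s\S]*?<\/style>/gi, ' ')   // drop CSS
    .replace(/<[^>]+>/g, ' ')                    // drop all remaining tags
    .replace(/\s+/g, ' ')                        // collapse whitespace
    .trim();
}

// Hypothetical threshold: less than minChars of visible text suggests the
// page needs a rendering step (a headless browser or a managed API).
function looksJsRendered(html, minChars = 200) {
  return visibleText(html).length < minChars;
}

const spaShell = `<!doctype html><html><head><title>App</title>
<script src="/bundle.js"></script></head>
<body><div id="root"></div></body></html>`;

console.log(looksJsRendered(spaShell)); // true
```

Pages flagged this way are the ones where a static parser will come back empty-handed.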

Maintenance cost: Low to medium. The libraries themselves need little upkeep, but you're writing site-specific selectors that break whenever a site updates its markup, and every new site means a new parser.
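One way to keep that cost visible is to record which fields each site's parser is supposed to extract and flag sites whose extractions start coming back empty. A minimal sketch, assuming a hypothetical log of recent runs shaped like `{ site, fields }`; the registry contents, the `staleSites` name, and the 50% threshold are all illustrative.

```javascript
// Hypothetical per-site selector registry: which fields each parser extracts.
const selectors = {
  'blog.example.com': { title: 'h1.post-title', body: 'div.post-content' },
  'news.example.org': { title: 'article h1', body: 'section.story' },
};

// Given recent extraction results ({ site, fields }), return the sites where
// at least `threshold` of runs came back with a missing or blank field.
// Empty fields right after a site redesign are a common symptom of selector rot.
function staleSites(registry, runs, threshold = 0.5) {
  const stats = new Map(); // site -> { total, failed }
  for (const { site, fields } of runs) {
    const expected = Object.keys(registry[site] ?? {});
    const s = stats.get(site) ?? { total: 0, failed: 0 };
    s.total += 1;
    // A run fails if any field the registry expects is missing or blank.
    if (expected.some((name) => !fields[name] || !String(fields[name]).trim())) {
      s.failed += 1;
    }
    stats.set(site, s);
  }
  return [...stats]
    .filter(([, s]) => s.failed / s.total >= threshold)
    .map(([site]) => site);
}

const runs = [
  { site: 'blog.example.com', fields: { title: 'Hello', body: 'Post text' } },
  { site: 'news.example.org', fields: { title: '', body: '' } },
  { site: 'news.example.org', fields: { title: 'Story', body: '' } },
];
console.log(staleSites(selectors, runs)); // [ 'news.example.org' ]
```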

Puppeteer / Playwright

Best for: Cases where you need full browser control and are willing to maintain the infrastructure.

These tools launch a real browser, render JavaScript, and give you the fully rendered DOM. You can handle infinite scroll, click through pagination, and extract content from SPAs.
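Infinite scroll shows what that control buys you. A minimal sketch, assuming a Playwright `Page` (only `page.evaluate` and `page.waitForTimeout` are used): keep jumping to the bottom until the page height stops growing or a round limit is hit. The `scrollUntilStable` name, the 1-second wait, and the 20-round cap are assumptions to tune per site.

```javascript
// Scroll an infinite-scroll page until its height stops growing.
// `page` is expected to be a Playwright Page. Recent Puppeteer releases may
// lack waitForTimeout, so a Puppeteer page would need a setTimeout-based sleep.
async function scrollUntilStable(page, { maxRounds = 20, waitMs = 1000 } = {}) {
  let lastHeight = await page.evaluate(() => document.body.scrollHeight);
  for (let round = 0; round < maxRounds; round++) {
    // Jump to the bottom so the site's lazy loader fires.
    await page.evaluate(() => window.scrollTo(0, document.body.scrollHeight));
    // Give new content time to load. A fixed wait is the simplest option;
    // waiting for a specific selector or network response is more robust.
    await page.waitForTimeout(waitMs);
    const height = await page.evaluate(() => document.body.scrollHeight);
    if (height === lastHeight) return round + 1; // no growth: reached the end
    lastHeight = height;
  }
  return maxRounds; // stopped by the safety limit
}
```

With Playwright, you would call it after `await page.goto(url)` and before extracting content; the return value is the number of scroll rounds performed.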

The catch: Running headless browsers at scale is an infrastructure problem. Memory leaks. Zombie processes. Browser crashes. Proxy rotation. You need a job queue, retry logic, and someone to maintain it all. I know — I lived this for years with Pageripper.
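Retry logic is usually one of the first pieces of that infrastructure you end up writing. A minimal sketch of retries with exponential backoff and "full jitter"; `withRetry` and its defaults are illustrative, and in practice you would typically retry only errors you know are transient (timeouts, crashed pages, 429 or 5xx responses).

```javascript
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Call `task` until it succeeds or `retries` extra attempts are used up.
// After failed attempt n (0-based), wait a random delay in
// [0, min(maxMs, baseMs * 2^n)) so many workers don't retry in lockstep.
async function withRetry(task, { retries = 3, baseMs = 500, maxMs = 10_000 } = {}) {
  for (let attempt = 0; ; attempt++) {
    try {
      return await task(attempt);
    } catch (err) {
      if (attempt >= retries) throw err; // out of attempts: surface the last error
      const cap = Math.min(maxMs, baseMs * 2 ** attempt);
      await sleep(Math.random() * cap);
    }
  }
}
```

For example, `withRetry(() => page.goto(url), { retries: 2 })` would retry a flaky navigation up to twice before giving up.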

Maintenance cost: High. Browser updates break things. Sites add bot detection. Your selectors rot. Budget 30-60% of initial build time for ongoing maintenance.

Firecrawl

Best for: AI applications that need clean, structured data from websites without the infrastructure overhead.

Firecrawl is a managed API that handles JavaScript rendering, content extraction, and boilerplate removal. You send it a URL, and it returns clean markdown and structured JSON.

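```javascript
// ES module: top-level await needs "type": "module" or a .mjs file
import Firecrawl from '@mendable/firecrawl-js'

// Placeholder key; in real code, read it from an environment variable
const app = new Firecrawl({ apiKey: 'fc-...' })

// Single page (scrapeUrl/crawlUrl are the v1 SDK method names;
// check the SDK docs for the version you install)
const page = await app.scrapeUrl('https://example.com/blog/post')
console.log(page.markdown) // Clean content, no boilerplate

// Entire site: crawl from the start URL, capped at `limit` pages
const site = await app.crawlUrl('https://docs.example.com', {
  limit: 100,
  scrapeOptions: { formats: ['markdown', 'html'] }
})
```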

The catch: It's a paid API. If you're scraping 10 pages a month, it's overkill. If you're scraping thousands of pages for AI applications, it saves you from building and maintaining scraping infrastructure.
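At the thousands-of-pages scale, you still want to bound how many requests are in flight at once. A minimal sketch of a concurrency-limited batch runner: `scrapeAll` is a hypothetical helper, and `scrapeOne` stands in for whatever single-page call you use (for example, a wrapper around `app.scrapeUrl` from the snippet above). The default concurrency of 5 is arbitrary; check your plan's rate limits.

```javascript
// Run scrapeOne(url) over many URLs with at most `concurrency` calls in flight.
// Results come back in input order; failures are recorded, not thrown,
// so one bad URL doesn't sink the whole batch.
async function scrapeAll(urls, scrapeOne, { concurrency = 5 } = {}) {
  const results = new Array(urls.length);
  let next = 0; // index of the next URL to claim (safe: JS runs this synchronously)

  async function worker() {
    while (next < urls.length) {
      const i = next++;
      try {
        results[i] = { url: urls[i], ok: true, value: await scrapeOne(urls[i]) };
      } catch (err) {
        results[i] = { url: urls[i], ok: false, error: String(err) };
      }
    }
  }

  // Start up to `concurrency` workers that pull from the shared queue.
  const workers = Array.from({ length: Math.min(concurrency, urls.length) }, worker);
  await Promise.all(workers);
  return results;
}
```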

Maintenance cost: Near zero. No selectors to maintain, no browsers to manage, no infrastructure to scale.

The Comparison

| | Beautiful Soup | Puppeteer | Firecrawl |
|---|---|---|---|
| Setup time | 30 minutes (static sites only) | 2-4 hours (basic), 2-4 weeks (production-grade) | 5 minutes |
| JavaScript rendering | ❌ No | ✅ Yes (you manage the browsers) | ✅ Yes (managed for you) |
| Clean content extraction | Manual (write selectors per site) | Manual (write selectors per site) | Automatic (AI-powered, no per-site selectors) |
| Scaling | Easy (it's just HTTP requests) | Hard (browser instances are heavy) | Easy (it's an API call) |
| Ongoing maintenance | Medium (selectors break) | High (browsers + selectors + infrastructure) | Near zero (the provider runs the infrastructure) |

My Recommendation

If you're building AI applications — RAG pipelines, AI agents, dataset builders — use Firecrawl. The engineering time you save on scraping infrastructure is better spent on your actual product.

If you're scraping a single static site with a stable structure, Beautiful Soup is fine. If you need full browser automation for complex interactions (logging in, filling forms, navigating multi-step flows), Puppeteer is the right tool.

But for "give me clean data from these URLs", which in my experience is about 90% of AI-related scraping, a managed API wins.
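The recommendation reduces to a small decision rule. A sketch that encodes it; the `chooseScraper` name and its input fields are made up for illustration, and the "stable structure" condition for Beautiful Soup is assumed rather than checked.

```javascript
// Encodes this post's rules of thumb. Field names are hypothetical.
//   needsInteraction: must log in, fill forms, or walk multi-step flows
//   staticHtml:       content is present in the raw HTML (no JS rendering)
//   siteCount:        how many distinct sites you need to extract from
function chooseScraper({ needsInteraction = false, staticHtml = false, siteCount = 1 } = {}) {
  if (needsInteraction) return 'puppeteer/playwright'; // full browser automation
  if (staticHtml && siteCount === 1) return 'beautiful-soup/cheerio'; // one stable static site
  return 'managed-api'; // "clean data from these URLs", e.g. Firecrawl
}

console.log(chooseScraper({ staticHtml: true }));       // → beautiful-soup/cheerio
console.log(chooseScraper({ needsInteraction: true })); // → puppeteer/playwright
console.log(chooseScraper({ siteCount: 50 }));          // → managed-api
```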
