JavaScript-powered websites are the norm now, not the exception. If you're scraping at scale, you'll hit walls: rate limits, anti-bot systems, authentication gates, and performance bottlenecks. This guide takes you past "it works on my machine" into production-grade JavaScript scraping.

If you haven't read "How to Scrape JavaScript-Heavy Websites in 2026", start there. This is Part 2: advanced techniques for real-world production workloads.

The Problem Space

When you're scraping 10,000+ pages, several things break:

- IP bans — sites detect repeated requests and block you

- Session timeouts — auth tokens expire mid-crawl

- Anti-bot detection — Cloudflare, DataDome, PerimeterX

- Memory exhaustion — too many headless browsers

- Rate limiting — 429 responses kill your pipeline

Firecrawl handles most of this out of the box, but here's how to push it further.

Handling Authentication

Many modern sites require login. Firecrawl can work with authenticated sessions:

from firecrawl import FirecrawlApp

app = FirecrawlApp(api_key='fc-xxxx')

# Login first to get session cookies
session = app.login(
    url='https://app.example.com/login',
    email='user@example.com',
    password='secure-password'
)

# Now scrape authenticated pages
result = app.scrape_url(
    url='https://app.example.com/dashboard',
    session=session
)

The session object maintains cookies and headers across requests. For sites with CSRF tokens, you'll need to extract the token first and include it in your login payload.
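The CSRF step can be sketched with the standard library alone. This is a minimal sketch, assuming the login form carries the token in a hidden input named `csrf_token`; the field name, the sample markup, and the `extract_csrf_token` helper are illustrative assumptions, not part of Firecrawl.

```python
from html.parser import HTMLParser


class CSRFTokenParser(HTMLParser):
    """Collects the value of the first <input> with a given name."""

    def __init__(self, field_name):
        super().__init__()
        self.field_name = field_name
        self.token = None

    def handle_starttag(self, tag, attrs):
        # Ignore everything except the first matching <input>
        if tag != "input" or self.token is not None:
            return
        attributes = dict(attrs)
        if attributes.get("name") == self.field_name:
            self.token = attributes.get("value")


def extract_csrf_token(html, field_name="csrf_token"):
    """Return the token string, or None if the field is absent."""
    parser = CSRFTokenParser(field_name)
    parser.feed(html)
    return parser.token


# Hypothetical login page markup, for illustration only
login_html = """
<form action="/login" method="post">
  <input type="hidden" name="csrf_token" value="abc123">
  <input type="email" name="email">
</form>
"""

token = extract_csrf_token(login_html)

# The token travels with the credentials in the login payload
login_payload = {
    "email": "user@example.com",
    "password": "secure-password",
    "csrf_token": token,
}
```

Real sites name the field differently (or put the token in a meta tag or cookie), so inspect the login form before relying on any one name.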

Proxies and IP Rotation

Scraping from a single IP is a dead giveaway. Firecrawl supports proxy rotation:

result = app.scrape_url(
    url='https://example.com',
    proxy={
        'url': 'http://proxy-provider:port',
        'username': 'user',
        'password': 'pass'
    }
)

Popular proxy services for JavaScript scraping:

- Bright Data — massive IP pool, good for hardened targets

- Oxylabs — enterprise-grade, reliable

- SmartProxy — cost-effective for mid-scale

- ScrapingBee — combines proxies with headless browser handling

For most use cases, rotating through 10-20 proxies is enough to stay under the radar.
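Rotation itself needs very little code. Here is a minimal round-robin sketch, assuming you hold a list of proxy credentials in the same dictionary shape as the example above; the `ProxyRotator` class and the proxy hostnames are hypothetical.

```python
from itertools import cycle


class ProxyRotator:
    """Hands out proxies in round-robin order."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._pool = cycle(proxies)

    def next_proxy(self):
        return next(self._pool)


# Hypothetical endpoints; substitute your provider's values
proxies = [
    {"url": "http://proxy-1.example.net:8000", "username": "user", "password": "pass"},
    {"url": "http://proxy-2.example.net:8000", "username": "user", "password": "pass"},
    {"url": "http://proxy-3.example.net:8000", "username": "user", "password": "pass"},
]

rotator = ProxyRotator(proxies)
urls = [f"https://example.com/page/{i}" for i in range(4)]

# Pair every URL with the next proxy in the cycle
assignments = [(url, rotator.next_proxy()) for url in urls]
```

With three proxies and four URLs, the fourth request wraps around to the first proxy. Round-robin spreads load evenly; random choice is an alternative if you want a less regular pattern.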

Waiting for JavaScript Rendering

The waitFor option (written wait_for in the Python examples here) is your friend. But for complex sites, you need more control:

# Wait for specific element
result = app.scrape_url(
    url='https://app.example.com/dashboard',
    wait_for={'selector': '.data-loaded'}
)

# Wait for network idle (more reliable for SPAs)
result = app.scrape_url(
    url='https://app.example.com/feed',
    wait_for={'network_idle': 3000}  # 3 seconds of no network activity
)

# Wait for JavaScript evaluation
result = app.scrape_url(
    url='https://app.example.com/chart',
    wait_for={
        'js_eval': 'document.querySelectorAll(".chart-bar").length > 0'
    }
)

The network idle approach is best for SPAs that fetch data dynamically. The JS evaluation approach works for client-side rendering that modifies the DOM after load.
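Conceptually, a selector or JS-evaluation wait is a poll-until-true loop with a timeout. The sketch below shows that idea in plain Python. It is not how Firecrawl implements waiting; `wait_until`, its parameters, and the `chart_ready` stand-in are hypothetical and only useful if you drive a browser yourself.

```python
import time


def wait_until(condition, timeout=10.0, interval=0.25):
    """Poll `condition` until it returns True or `timeout` seconds pass.

    Returns True if the condition was met, False on timeout.
    """
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


# Stand-in for a real check such as "are any .chart-bar elements present?"
calls = {"count": 0}


def chart_ready():
    calls["count"] += 1
    return calls["count"] >= 3  # becomes true on the third poll


ready = wait_until(chart_ready, timeout=2.0, interval=0.01)
```

The timeout matters as much as the condition: without it, a page that never renders the element stalls the whole worker.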

Anti-Bot Evasion

Modern sites use sophisticated detection. Firecrawl has built-in stealth, but you can improve success rates:

1. Randomize User Agents

import random

user_agents = [
    'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36...',
    'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36...',
    'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36...',
]

result = app.scrape_url(
    url='https://example.com',
    headers={'User-Agent': random.choice(user_agents)}
)

2. Set Realistic Viewport

result = app.scrape_url(
    url='https://example.com',
    emulate={'viewport': {'width': 1920, 'height': 1080}}
)

3. Disable Automation Flags

result = app.scrape_url(
    url='https://example.com',
    stealth=True  # Enables automation detection removal
)

4. Add Human-Like Delays

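import random
import time

def human_delay():
    # Pause 0.5-2.5 seconds so requests are not evenly spaced
    time.sleep(random.uniform(0.5, 2.5))

# Between page requests (urls is your list of target pages)
for url in urls:
    app.scrape_url(url)
    human_delay()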

Rate Limiting Strategy

Respect the target site. Here's a production-grade approach:

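import asyncio
import random
from collections import deque

from ratelimit import limits, sleep_and_retry

@sleep_and_retry
@limits(calls=10, period=60)  # 10 requests per minute
def scrape_with_rate_limit(url):
    return app.scrape_url(url)

# For concurrent scraping, share one queue between workers
url_queue = deque(all_urls)  # all_urls is your list of target pages
active_workers = 5
max_retries = 3
attempts = {}
results = []

async def worker():
    while url_queue:
        url = url_queue.popleft()
        try:
            # Run the blocking call in a thread so other workers keep going
            result = await asyncio.to_thread(scrape_with_rate_limit, url)
            results.append(result)
        except Exception as e:
            # Put back on queue for retry, up to max_retries attempts
            attempts[url] = attempts.get(url, 0) + 1
            if attempts[url] < max_retries:
                url_queue.append(url)
            print(f"Error on {url}: {e}")
        await asyncio.sleep(random.uniform(1, 3))

async def main():
    await asyncio.gather(*(worker() for _ in range(active_workers)))

asyncio.run(main())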

The 10 requests/minute rule is conservative. Many sites tolerate 30-60/min if you're not causing load spikes.
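When a 429 does arrive, back off instead of retrying at the same pace. Below is a minimal sketch of exponential backoff with full jitter. It assumes the server's Retry-After header, when present, has already been parsed into seconds (the header can also be an HTTP date, which this sketch does not handle); `backoff_delay` and its defaults are hypothetical.

```python
import random


def backoff_delay(attempt, base=1.0, cap=60.0, retry_after=None):
    """Seconds to wait before retry number `attempt` (0-based).

    If the server supplied Retry-After in seconds, honor it as given.
    Otherwise pick a random delay up to base * 2**attempt, capped at `cap`.
    """
    if retry_after is not None:
        return max(0.0, float(retry_after))
    ceiling = min(cap, base * (2 ** attempt))
    return random.uniform(0, ceiling)


# Upper bounds for the first few retries with the defaults
ceilings = [min(60.0, 1.0 * 2 ** attempt) for attempt in range(8)]
# ceilings grows 1, 2, 4, 8, 16, 32 and then stays at the 60-second cap
```

The jitter keeps a fleet of workers from retrying in lockstep after the same 429, which would otherwise recreate the spike that triggered the limit.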

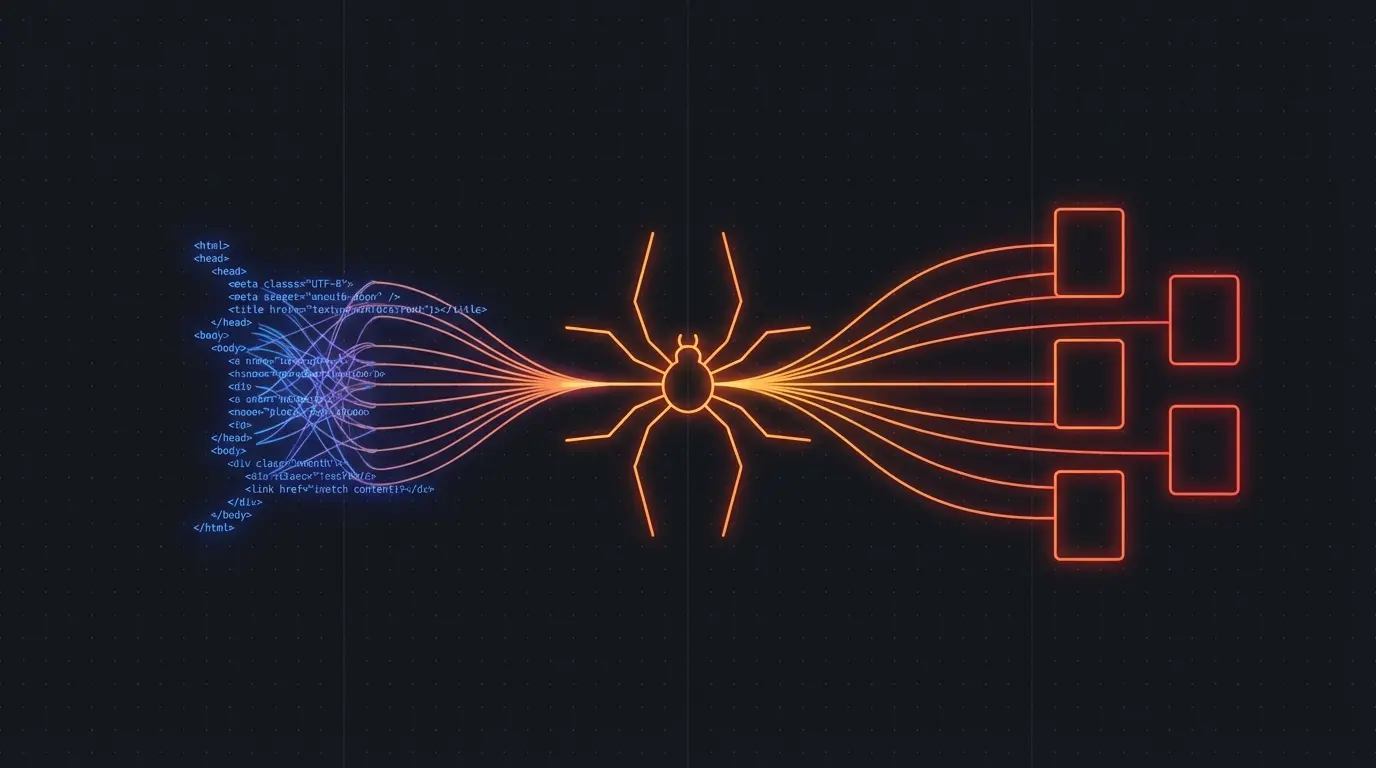

Large-Scale Scraping Architecture

For enterprise workloads, here's what works:

┌─────────────┐     ┌──────────────┐     ┌─────────────┐
│     URL     │────▶│    Queue     │────▶│   Workers   │
│   Source    │     │   (Redis)    │     │   (10-20)   │
└─────────────┘     └──────────────┘     └─────────────┘
                                                │
                    ┌──────────────┐            │
                    │   Storage    │◀───────────┘
                    │   (S3/DB)    │
                    └──────────────┘

- Queue — Redis or RabbitMQ for URL distribution

- Workers — Separate processes, not threads (GIL)

- Storage — Raw HTML to S3, parsed data to database

- Monitoring — Track success rates, 429s, latency
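The monitoring piece can start as a small in-process counter before you reach for a metrics stack. This is a minimal sketch, assuming each worker can report an HTTP status code and a latency per request; the `CrawlStats` class and the sample numbers are hypothetical.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class CrawlStats:
    """Tracks success rate, 429 count, and latency for a crawl."""

    total: int = 0
    successes: int = 0
    rate_limited: int = 0
    latencies: list = field(default_factory=list)

    def record(self, status_code, latency_seconds):
        self.total += 1
        self.latencies.append(latency_seconds)
        if 200 <= status_code < 300:
            self.successes += 1
        elif status_code == 429:
            self.rate_limited += 1

    @property
    def success_rate(self):
        return self.successes / self.total if self.total else 0.0

    @property
    def mean_latency(self):
        return mean(self.latencies) if self.latencies else 0.0


# Made-up observations: (status code, latency in seconds)
stats = CrawlStats()
for status, latency in [(200, 0.5), (200, 1.5), (429, 0.25), (500, 1.75)]:
    stats.record(status, latency)

summary = (
    f"success={stats.success_rate:.0%} "
    f"429s={stats.rate_limited} "
    f"mean_latency={stats.mean_latency:.2f}s"
)
```

A rising 429 count is the earliest signal that the request rate or proxy pool needs adjusting, so it is worth alerting on separately from the overall success rate.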

Memory Management

Headless browsers eat RAM. For large crawls:

# Limit concurrent browsers
max_browsers = 4

# Reuse browser instances
browser = app.get_browser()

# Disable images/css for speed
result = app.scrape_url(
    url='https://example.com',
    disable_scripts=False,
    disable_images=True,  # Huge memory savings
    disable_css=True
)

# Explicit cleanup
del result
browser.close()

A single headless Chrome instance uses 100-300MB. With 20 concurrent workers, that's 2-6GB of RAM. Plan accordingly.
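The same arithmetic can run in reverse to pick a concurrency limit from a RAM budget, and a semaphore can then enforce it. The sketch below assumes the worst-case 300MB per browser from the figures above; `max_browsers_for`, `crawl`, and the `fake_fetch` stand-in are hypothetical names, and `fake_fetch` only simulates a page load.

```python
import asyncio


def max_browsers_for(ram_budget_mb, per_browser_mb=300):
    """How many browsers fit in the budget at the worst-case footprint."""
    return max(1, ram_budget_mb // per_browser_mb)


async def crawl(urls, fetch, max_browsers):
    """Fetch all URLs, never running more than max_browsers at once."""
    semaphore = asyncio.Semaphore(max_browsers)

    async def bounded(url):
        async with semaphore:
            return await fetch(url)

    return await asyncio.gather(*(bounded(url) for url in urls))


# Stand-in fetcher that records how many "browsers" are open at once
usage = {"active": 0, "peak": 0}


async def fake_fetch(url):
    usage["active"] += 1
    usage["peak"] = max(usage["peak"], usage["active"])
    await asyncio.sleep(0.01)  # simulate page load time
    usage["active"] -= 1
    return f"html for {url}"


urls = [f"https://example.com/page/{i}" for i in range(10)]
limit = max_browsers_for(ram_budget_mb=1200)  # 1200 // 300 = 4
pages = asyncio.run(crawl(urls, fake_fetch, limit))
```

Sizing for the worst case leaves headroom; sizing for the 100MB best case is how crawls get killed by the OOM killer halfway through.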

What You Learned

- Authentication — maintain sessions across requests

- Proxies — rotate IPs to avoid bans

- Wait strategies — network idle, selector, JS eval

- Anti-bot evasion — user agents, viewport, stealth mode

- Rate limiting — respectful crawling with backoff

- Production architecture — queues, workers, storage

- Memory management — limit concurrent browsers

Ready to build your scraping infrastructure? Firecrawl handles the hard stuff so you can focus on data extraction logic.

