Your literature review needs to cover 847 papers across 12 journals, 6 conference proceedings, and 15 institutional repositories. The citation network spans three decades of research. Manual collection would take months. Your thesis committee meets next month.

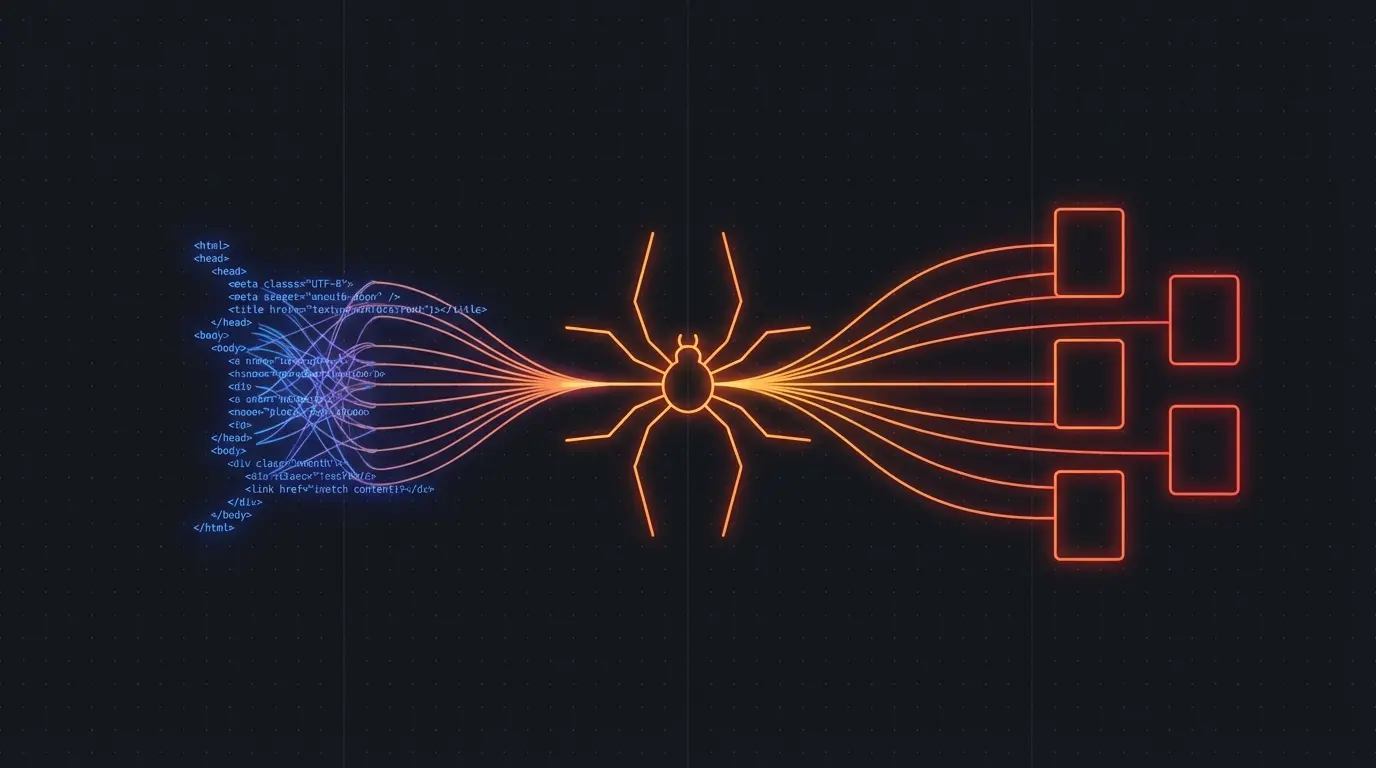

Academic research in 2026 increasingly depends on systematic data collection at a scale that manual search cannot match. Web scraping is more than a convenience: it is core infrastructure for comprehensive literature analysis and evidence-based research.

Literature Review at Scale

Traditional literature review stops when manual search becomes impractical. Systematic web scraping removes that constraint:

Comprehensive journal coverage. Academic publishers organize content differently, use inconsistent metadata formats, and limit bulk downloads. Scraping normalizes access across journals, conferences, and preprint servers.

Citation network analysis. Following citation chains manually misses cross-disciplinary connections and recent work. Automated collection captures the full citation graph for network analysis, influence tracking, and gap identification.

Gray literature and institutional knowledge. University repositories, government research labs, and industry publications contain crucial work that doesn't appear in traditional databases. Systematic crawling captures this distributed knowledge base.

Temporal analysis of research trends. Track how research topics, methodologies, and terminology evolve over time by scraping publication metadata across decades of academic work.
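As a minimal sketch of the temporal analysis idea, assume each scraped page has already been reduced to a `{ title, year }` record (a hypothetical shape, not something Firecrawl returns directly). The function below counts, per publication year, how many titles mention a given term:

```javascript
// Hypothetical records; in practice these come from your own metadata extraction.
const records = [
  { title: 'Neural networks for speech recognition', year: 1998 },
  { title: 'Deep learning with neural networks', year: 2015 },
  { title: 'Transformers for language modeling', year: 2019 },
  { title: 'Scaling neural language models', year: 2019 }
]

// Count, per year, how many titles mention the term (case-insensitive).
function termFrequencyByYear(records, term) {
  const needle = term.toLowerCase()
  const counts = new Map()
  for (const { title, year } of records) {
    if (!counts.has(year)) counts.set(year, { total: 0, matches: 0 })
    const bucket = counts.get(year)
    bucket.total += 1
    if (title.toLowerCase().includes(needle)) bucket.matches += 1
  }
  // Return years in ascending order, with the share of titles that match.
  return [...counts.entries()]
    .sort(([a], [b]) => a - b)
    .map(([year, { total, matches }]) => ({ year, total, matches, share: matches / total }))
}

const trend = termFrequencyByYear(records, 'neural')
console.log(trend)
```

Reporting the share of matching titles rather than the raw count keeps years with very different publication volumes comparable.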

Building a Research Pipeline

Firecrawl handles the technical complexity of academic website structures:

```javascript
import Firecrawl from '@mendable/firecrawl-js'

const app = new Firecrawl({ apiKey: 'fc-...' })

// Target academic publishers and repositories
// (placeholder URLs; substitute your own sources)
const academicSources = [
  'https://arxiv.org/search/?query=machine+learning',
  'https://journals.acm.org/browse-by-subject',
  'https://repository.university.edu/collections',
  'https://conference.ieee.org/proceedings'
]

for (const source of academicSources) {
  const result = await app.crawlUrl(source, {
    limit: 500,
    scrapeOptions: {
      formats: ['markdown'],
      onlyMainContent: true
    }
  })

  // Extract publication metadata.
  // The extract* functions and storePapers are your own helpers, not part of the SDK.
  const papers = result.data.map(page => ({
    title: extractTitle(page.markdown),
    authors: extractAuthors(page.markdown),
    abstract: extractAbstract(page.markdown),
    citations: extractCitations(page.markdown),
    publicationDate: extractDate(page.metadata),
    sourceUrl: page.metadata?.sourceURL
  }))

  await storePapers(papers)
}
```
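The pipeline above leaves helpers such as `extractTitle` and `extractAbstract` undefined. One naive way to write them is sketched below, assuming the scraped markdown uses a level-1 heading for the title and an "Abstract" heading before the abstract. Both assumptions are illustrative; real publisher pages vary and usually need per-source rules.

```javascript
// Hypothetical helper: use the first level-1 markdown heading as the title.
function extractTitle(markdown) {
  const match = markdown.match(/^#\s+(.+)$/m)
  return match ? match[1].trim() : null
}

// Hypothetical helper: take the text after an "Abstract" heading,
// up to the next heading or the end of the document.
function extractAbstract(markdown) {
  const match = markdown.match(
    /^#{1,6}\s*Abstract\s*\n+([\s\S]*?)(?=\n#{1,6}\s|(?![\s\S]))/mi
  )
  return match ? match[1].trim() : null
}

// Made-up page content for illustration only.
const samplePage = [
  '# A Survey of Graph Sampling',
  '',
  '## Abstract',
  '',
  'We review sampling methods.',
  'They differ in cost.',
  '',
  '## 1 Introduction',
  '',
  'Graphs are everywhere.'
].join('\n')

console.log(extractTitle(samplePage))
console.log(extractAbstract(samplePage))
```

Returning `null` when a pattern does not match makes extraction failures easy to count and review, rather than storing empty strings silently.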

Run the crawl weekly to stay current with your research area, and set up alerts for new publications from key authors, institutions, or conferences.
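A simple alerting step can be sketched as a diff between the papers found in the latest run and the URLs seen in earlier runs. The record shape (`title`, `authors` as an array of strings, `sourceUrl`) and the function names here are assumptions made for illustration:

```javascript
// Return papers whose sourceUrl has not been seen before, and remember them.
function findNewPapers(seenUrls, papers) {
  const fresh = []
  for (const paper of papers) {
    if (!paper.sourceUrl || seenUrls.has(paper.sourceUrl)) continue
    seenUrls.add(paper.sourceUrl)
    fresh.push(paper)
  }
  return fresh
}

// Keep only papers with at least one author on the watch list (case-insensitive).
function matchWatchedAuthors(papers, watchedAuthors) {
  const watched = new Set(watchedAuthors.map(name => name.toLowerCase()))
  return papers.filter(paper =>
    (paper.authors ?? []).some(name => watched.has(name.toLowerCase()))
  )
}

// Made-up data: one URL from a previous run, three papers from this week's run.
const seenUrls = new Set(['https://example.org/papers/1'])
const thisWeek = [
  { title: 'Paper one', authors: ['A. Author'], sourceUrl: 'https://example.org/papers/1' },
  { title: 'Paper two', authors: ['B. Writer'], sourceUrl: 'https://example.org/papers/2' },
  { title: 'Paper three', authors: ['C. Scholar'], sourceUrl: 'https://example.org/papers/3' }
]

const fresh = findNewPapers(seenUrls, thisWeek)
const alerts = matchWatchedAuthors(fresh, ['b. writer'])
console.log(alerts.map(paper => paper.title))
```

In a real pipeline the set of seen URLs would be loaded from and saved to persistent storage between runs.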

Citation Analysis and Impact Tracking

Understanding research impact requires analyzing citation patterns across multiple databases:

Google Scholar profiles. Scrape author profiles to track career trajectories, collaboration networks, and research evolution. Identify rising stars and established experts by citation growth patterns.

Conference proceedings and workshop papers. Many innovative ideas appear first in workshop papers and conference presentations before full journal publication. Systematic collection captures early signals of research directions.

Cross-disciplinary citation tracking. Important ideas often jump between fields. Scraping citations across disciplines reveals knowledge transfer patterns that single-domain searches miss.

```javascript
// Track citation impact across multiple sources
const authorProfile = await app.scrapeUrl('https://scholar.google.com/citations?user=XXXXX', {
  formats: ['markdown']
})

const papers = extractPaperList(authorProfile.markdown)

for (const paper of papers) {
  const citations = await app.scrapeUrl(paper.citedByUrl, {
    formats: ['markdown']
  })

  const citingPapers = extractCitingPapers(citations.markdown)
  await analyzeCitationContext(paper, citingPapers)
}
```

This reveals how ideas propagate through academic communities and identifies influential work before traditional impact metrics catch up.
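Once citing papers have been collected, the propagation analysis can start from a plain edge list. The sketch below assumes each paper has an id and a `field` label and that citations are stored as `{ citing, cited }` pairs. These shapes are hypothetical, chosen only to illustrate counting total and cross-disciplinary citations:

```javascript
// Made-up metadata and citation edges for illustration.
const papersById = new Map([
  ['p1', { title: 'Paper 1', field: 'computer science' }],
  ['p2', { title: 'Paper 2', field: 'computer science' }],
  ['p3', { title: 'Paper 3', field: 'biology' }],
  ['p4', { title: 'Paper 4', field: 'economics' }]
])

const citationEdges = [
  { citing: 'p2', cited: 'p1' },
  { citing: 'p3', cited: 'p1' },
  { citing: 'p4', cited: 'p1' },
  { citing: 'p4', cited: 'p3' }
]

// For each cited paper, count all citations and those arriving from another field.
function summarizeCitations(papersById, edges) {
  const summary = new Map()
  for (const { citing, cited } of edges) {
    const source = papersById.get(citing)
    const target = papersById.get(cited)
    if (!source || !target) continue // skip edges without metadata on both ends
    if (!summary.has(cited)) summary.set(cited, { citedBy: 0, crossField: 0 })
    const entry = summary.get(cited)
    entry.citedBy += 1
    if (source.field !== target.field) entry.crossField += 1
  }
  return summary
}

const citationSummary = summarizeCitations(papersById, citationEdges)
console.log(Object.fromEntries(citationSummary))
```

A high `crossField` count relative to `citedBy` is one rough signal that an idea is moving between disciplines.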

Data Collection for Empirical Research

Many research questions require collecting data from websites, online platforms, and digital archives:

Social media research. Study communication patterns, information diffusion, and community formation by systematically collecting public posts, user profiles, and interaction networks.

Economic and financial data. Track market behavior, pricing patterns, and regulatory changes by scraping financial websites, government databases, and industry reports.

Public policy and governance studies. Monitor legislative processes, policy implementations, and public comment periods by collecting data from government websites and regulatory agencies.

Digital humanities and cultural studies. Analyze digital culture, online communities, and cultural production by systematically collecting content from blogs, forums, and cultural websites.

Reproducibility and Data Management

Academic scraping requires special attention to reproducibility and data integrity:

Version control for scraped data. Web content changes constantly. Maintain versioned datasets with collection timestamps, source URLs, and scraping parameters to ensure reproducible analysis.

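```javascript
const crawlMetadata = {
  timestamp: new Date().toISOString(),
  // Record the SDK version you actually have installed
  firecrawlVersion: '1.0.0',
  // Mirror the options passed to crawlUrl so the crawl can be repeated exactly
  crawlParameters: {
    limit: 500,
    scrapeOptions: {
      formats: ['markdown'],
      onlyMainContent: true
    }
  },
  sourceUrls: academicSources
}

await storeCrawlMetadata(crawlMetadata)
```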
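Versioning also benefits from knowing which pages changed between two collection runs. One way to sketch this is to fingerprint each page's markdown and compare snapshots. The hash below is a small FNV-1a-style function over UTF-16 code units, used only to keep the example dependency-free; for archival datasets a cryptographic digest such as SHA-256 is the more usual choice. All function names are hypothetical.

```javascript
// Non-cryptographic FNV-1a-style fingerprint over UTF-16 code units.
function fingerprint(text) {
  let hash = 0x811c9dc5
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i)
    hash = Math.imul(hash, 0x01000193) >>> 0
  }
  return hash.toString(16).padStart(8, '0')
}

// Build a Map of sourceUrl -> fingerprint for one collection run.
function snapshot(pages) {
  return new Map(pages.map(page => [page.sourceUrl, fingerprint(page.markdown)]))
}

// Compare two snapshots and list added, changed, and removed URLs.
function diffSnapshots(previous, current) {
  const changes = { added: [], changed: [], removed: [] }
  for (const [url, hash] of current) {
    if (!previous.has(url)) changes.added.push(url)
    else if (previous.get(url) !== hash) changes.changed.push(url)
  }
  for (const url of previous.keys()) {
    if (!current.has(url)) changes.removed.push(url)
  }
  return changes
}

// Made-up pages from two runs.
const lastRun = snapshot([
  { sourceUrl: 'https://example.org/a', markdown: 'v1' },
  { sourceUrl: 'https://example.org/b', markdown: 'text' }
])
const thisRun = snapshot([
  { sourceUrl: 'https://example.org/a', markdown: 'v2' },
  { sourceUrl: 'https://example.org/c', markdown: 'new' }
])

const changes = diffSnapshots(lastRun, thisRun)
console.log(changes)
```

Storing the resulting change list next to the crawl metadata gives later readers a record of what differed between dataset versions.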

Documentation of collection methodology. Include scraping methodology, selection criteria, and data processing steps in research documentation. This enables replication and helps reviewers assess data quality.

Ethical compliance. Check institutional IRB requirements for web scraping research. Some universities classify systematic web data collection as human subjects research requiring approval.

Data sharing and preservation. Plan for data sharing requirements from funding agencies and journals. Consider privacy implications of collected data and develop appropriate de-identification procedures.
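As one illustration of a de-identification step, the sketch below replaces account handles with stable pseudonyms, both in the `author` field and in @mentions inside the text. The post shape and function names are assumptions. Pseudonymization of this kind is only a first step and does not by itself guarantee anonymity.

```javascript
// Create a function that maps each handle to a stable pseudonym (user_0001, user_0002, ...).
function createPseudonymizer(prefix = 'user') {
  const assigned = new Map()
  return handle => {
    const key = handle.trim().toLowerCase()
    if (!assigned.has(key)) {
      assigned.set(key, `${prefix}_${String(assigned.size + 1).padStart(4, '0')}`)
    }
    return assigned.get(key)
  }
}

// Replace author handles and @mentions with pseudonyms; other fields pass through unchanged.
function deidentifyPosts(posts, pseudonymize) {
  return posts.map(({ author, text, ...rest }) => ({
    ...rest,
    author: pseudonymize(author),
    text: text.replace(/@(\w+)/g, (_, handle) => '@' + pseudonymize(handle))
  }))
}

// Made-up posts for illustration.
const posts = [
  { id: 1, author: 'alice', text: 'Replying to @bob about the dataset' },
  { id: 2, author: 'Bob', text: 'Thanks @alice' }
]

const cleaned = deidentifyPosts(posts, createPseudonymizer())
console.log(cleaned)
```

Because the same handle always maps to the same pseudonym within a run, interaction networks remain analyzable after the original handles are removed.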

Specialized Academic Applications

Different research domains benefit from domain-specific scraping strategies:

Medical and health research. Scrape clinical trial databases, health agency reports, and medical literature for systematic reviews and meta-analyses. Track drug approval processes and safety reporting patterns.

Legal and policy research. Collect court decisions, regulatory filings, and legislative records to analyze legal trends, policy outcomes, and regulatory capture patterns.

Environmental and climate research. Gather environmental monitoring data, policy documents, and research publications to track climate impacts, policy responses, and scientific consensus evolution.

Historical and archival research. Digitized archives, newspaper databases, and historical websites contain vast amounts of historical data suitable for computational historical analysis.

Academic web scraping amplifies research capability without replacing scholarly rigor. The goal is comprehensive data collection that enables deeper analysis, not shortcuts around careful methodology.
