You need to scrape 500 websites for training data. Your AI pipeline needs clean, structured content that LLMs can process effectively. You've narrowed it down to two platforms: Firecrawl and Apify.

Both are powerful. Both handle JavaScript-heavy sites and scale to enterprise workloads. But they're optimized for different use cases. The right choice depends on your specific AI workflow requirements.

Architecture and Approach

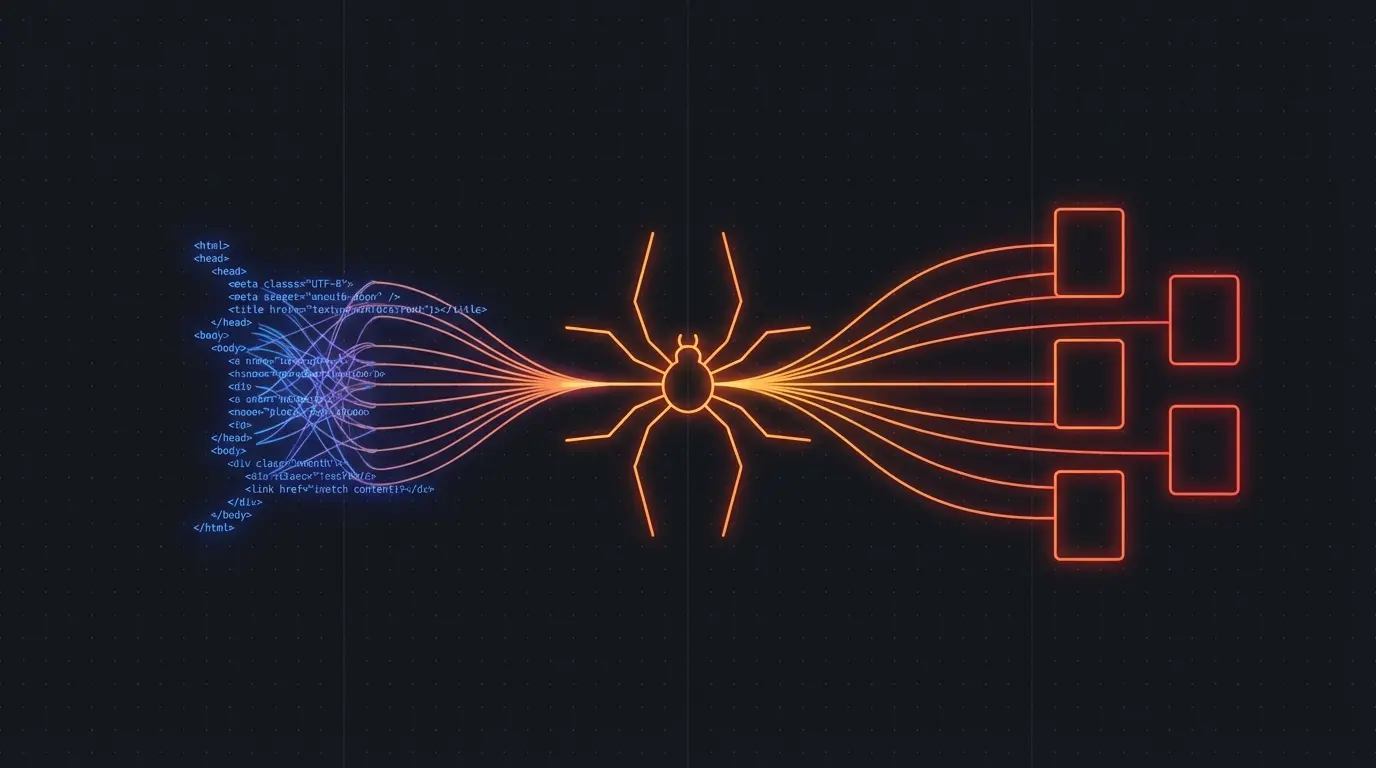

Firecrawl is built specifically for AI and LLM workflows. It converts any website into clean markdown, handles anti-bot measures automatically, and provides structured output that LLMs can process without additional cleaning.

Apify is a general-purpose web scraping and automation platform. It provides a marketplace of pre-built scrapers, supports custom JavaScript actors, and offers extensive configuration options for complex scraping scenarios.

The fundamental difference: Firecrawl optimizes for AI consumption, while Apify optimizes for flexibility and customization.

Getting Started Experience

Firecrawl: AI-First Simplicity

```javascript
import FirecrawlApp from '@mendable/firecrawl-js'

const app = new FirecrawlApp({ apiKey: 'fc-...' })

// Scrape a single page
const result = await app.scrapeUrl('https://example.com', {
  formats: ['markdown', 'html'],
  onlyMainContent: true
})
if (!result.success) throw new Error(`Scrape failed: ${result.error}`)

console.log(result.markdown) // Clean, LLM-ready content

// Crawl an entire site (up to 100 pages)
const crawlResult = await app.crawlUrl('https://example.com', {
  limit: 100,
  scrapeOptions: { formats: ['markdown'] }
})
if (!crawlResult.success) throw new Error(`Crawl failed: ${crawlResult.error}`)

crawlResult.data.forEach(page => {
  console.log(`${page.metadata?.title}: ${page.markdown?.substring(0, 100)}...`)
})
```

Firecrawl works out of the box. Beyond an API key and a few options, there are no custom actors to configure and no JavaScript to debug. You get clean markdown that LLMs can process immediately.
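To make the `limit` option concrete, here is a conceptual sketch of a breadth-first crawl that stops after a fixed number of pages. It runs over a made-up, in-memory link graph (the `site` dict) and is not Firecrawl's actual traversal logic, which this article does not describe.

```python
from collections import deque


def crawl_order(start: str, links: dict[str, list[str]], limit: int) -> list[str]:
    """Breadth-first traversal that stops after `limit` unique pages."""
    seen = {start}
    queue = deque([start])
    visited: list[str] = []
    while queue and len(visited) < limit:
        url = queue.popleft()
        visited.append(url)  # this is where a real crawler would scrape the page
        for nxt in links.get(url, []):
            if nxt not in seen:  # never enqueue the same page twice
                seen.add(nxt)
                queue.append(nxt)
    return visited


# Hypothetical site structure: page -> outgoing links
site = {
    "/": ["/docs", "/blog"],
    "/docs": ["/docs/api", "/"],
    "/blog": ["/blog/post-1"],
}
print(crawl_order("/", site, limit=3))  # ['/', '/docs', '/blog']
```

Pages closer to the start URL are reached first, so a low limit tends to capture top-level pages rather than deep ones.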

Apify: Power Through Complexity

```javascript
import { ApifyClient } from 'apify-client'

const client = new ApifyClient({ token: 'apify_api_...' })

// Run the pre-built Website Content Crawler actor and wait for it to finish
const run = await client.actor('apify/website-content-crawler').call({
  startUrls: [{ url: 'https://example.com' }],
  maxCrawlPages: 100,
  removeElementsCssSelector: 'nav, footer, aside',
  saveMarkdown: true
})

// Results are written to the run's default dataset
const { items } = await client.dataset(run.defaultDatasetId).listItems()

items.forEach(item => {
  console.log(item.text) // Requires additional processing for LLM consumption
})
```

Apify requires more setup but gives you access to thousands of pre-built scrapers and the ability to write custom JavaScript actors for specialized needs.
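The `removeElementsCssSelector: 'nav, footer, aside'` setting drops those elements before text extraction. A rough, standard-library-only sketch of the same idea follows; it handles plain tag names only (no classes, IDs, or compound selectors) and is an illustration, not how the actor is implemented.

```python
from html.parser import HTMLParser


class ElementStripper(HTMLParser):
    """Collects text, skipping everything inside the given tag names."""

    def __init__(self, skip_tags: set[str]):
        super().__init__()
        self.skip_tags = skip_tags
        self.depth = 0  # > 0 while inside a skipped element (handles nesting)
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.skip_tags:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.skip_tags and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth == 0 and data.strip():
            self.parts.append(data.strip())


def strip_elements(html: str, skip_tags=("nav", "footer", "aside")) -> str:
    parser = ElementStripper(set(skip_tags))
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts)


page = "<nav>Home | Docs</nav><main><h1>Title</h1><p>Body text.</p></main><footer>(c) 2026</footer>"
print(strip_elements(page))  # Title Body text.
```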

Content Quality for AI Workflows

The biggest differentiator is content quality for LLM processing:

Firecrawl's AI-Optimized Output

Firecrawl automatically handles:

- Clean markdown formatting that LLMs parse reliably

- Main content extraction that filters out navigation, ads, and boilerplate

- Structured metadata including titles, descriptions, and canonical URLs

- Text normalization that handles encoding issues and special characters

The output is immediately suitable for RAG systems, training data preparation, and LLM analysis without additional processing.
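Firecrawl's internal normalization rules are not documented in this article, so the following is only a sketch of what "text normalization" commonly means in an LLM data pipeline: Unicode NFKC normalization, removal of zero-width characters, and whitespace collapsing. The specific rules are assumptions; adjust them to your corpus.

```python
import re
import unicodedata


def normalize_text(text: str) -> str:
    # NFKC folds compatibility characters, e.g. non-breaking spaces and ligatures
    text = unicodedata.normalize("NFKC", text)
    # NFKC keeps zero-width characters, so remove them explicitly
    text = re.sub(r"[\u200b\u200c\u200d\ufeff]", "", text)
    # Collapse runs of spaces/tabs, and limit blank lines to one
    text = re.sub(r"[ \t]+", " ", text)
    text = re.sub(r"\n{3,}", "\n\n", text)
    return text.strip()


print(repr(normalize_text("\ufb01le\u00a0name\u200b  here\n\n\n\nnext")))  # 'file name here\n\nnext'
```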

Apify's Flexible but Raw Output

Apify gives you complete control but requires more post-processing:

- HTML, text, or custom formats depending on the actor you choose

- Full page content including navigation, ads, and sidebar content unless filtered

- Configurable extraction rules that require CSS selector knowledge

- Custom post-processing needed to prepare content for LLM consumption

For AI workflows, you'll need to build additional cleaning and normalization steps.
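One common post-processing step (an assumption about your pipeline, not an Apify feature) is removing lines that repeat across many pages of the same site, such as menus or footers that the CSS selectors missed. A minimal sketch:

```python
from collections import Counter


def drop_repeated_lines(pages: list[str], max_share: float = 0.5) -> list[str]:
    """Remove lines that appear on more than `max_share` of pages (likely boilerplate).

    Blank lines are dropped as well.
    """
    # Count each distinct non-empty line once per page
    line_sets = [{line.strip() for line in page.splitlines() if line.strip()} for page in pages]
    counts = Counter(line for lines in line_sets for line in lines)
    threshold = max_share * len(pages)
    cleaned = []
    for page in pages:
        kept = [line for line in page.splitlines() if line.strip() and counts[line.strip()] <= threshold]
        cleaned.append("\n".join(kept))
    return cleaned


pages = [
    "Home | Docs\nFirst article body\n© Example Inc.",
    "Home | Docs\nSecond article body\n© Example Inc.",
    "Home | Docs\nThird article body\n© Example Inc.",
]
print(drop_repeated_lines(pages))  # ['First article body', 'Second article body', 'Third article body']
```

The `max_share` threshold is a tuning knob: too low and legitimately repeated content (for example, a product name) disappears.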

Handling Anti-Bot Measures

Both platforms handle bot detection, but with different approaches:

Firecrawl handles anti-bot measures transparently. It rotates user agents, manages browser fingerprinting, and solves CAPTCHAs automatically. You never see the complexity.

Apify gives you fine-grained control over bot detection strategies. You can configure proxy rotation, browser fingerprints, and custom headers. More control means more complexity when things go wrong.
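Proxy and header rotation generally comes down to cycling through a pool on each request. The sketch below shows only that round-robin idea with placeholder values; it is not Apify's proxy API, and a real setup would also need to handle failing proxies and retries.

```python
from itertools import cycle

# Hypothetical values: replace with your own proxy endpoints and user-agent strings
PROXIES = ["http://proxy-a.example:8000", "http://proxy-b.example:8000"]
USER_AGENTS = ["ua-1", "ua-2", "ua-3"]


def request_configs(urls: list[str]):
    """Yield one request config per URL, rotating proxies and user agents round-robin."""
    proxies, agents = cycle(PROXIES), cycle(USER_AGENTS)
    for url in urls:
        yield {"url": url, "proxy": next(proxies), "headers": {"User-Agent": next(agents)}}


for config in request_configs(["https://example.com/a", "https://example.com/b", "https://example.com/c"]):
    print(config["proxy"], config["headers"]["User-Agent"])
```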

For AI training data collection where you need reliability over customization, Firecrawl's transparent approach is preferable.

Pricing and Scale

Firecrawl pricing (as of March 2026):

- Starter: $29/month for 2,000 pages

- Standard: $99/month for 10,000 pages

- Scale: $299/month for 50,000 pages

- Enterprise: Custom pricing for higher volumes

Apify pricing:

- Free: $5 monthly credits (~1,000-2,000 pages depending on actor)

- Starter: $49/month for $49 in credits

- Team: $149/month for $149 in credits

- Enterprise: Custom pricing

Apify's credit system is more complex but potentially more cost-effective for high-volume, simple scraping. Firecrawl's per-page pricing is more predictable for AI workflows.
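A quick way to compare these tiers is to compute cost per 1,000 pages and pick the cheapest plan that covers a given monthly volume. This sketch uses only the list prices quoted above and ignores overages, per-page credit multipliers, and any other billing details.

```python
# Firecrawl list prices quoted above (as of March 2026): (plan, USD per month, pages per month)
FIRECRAWL_PLANS = [
    ("Starter", 29, 2_000),
    ("Standard", 99, 10_000),
    ("Scale", 299, 50_000),
]


def cheapest_plan(pages_per_month: int):
    """Cheapest listed plan whose allowance covers the volume; None means Enterprise territory."""
    fitting = [plan for plan in FIRECRAWL_PLANS if plan[2] >= pages_per_month]
    return min(fitting, key=lambda plan: plan[1]) if fitting else None


# Effective cost per 1,000 pages when a plan's allowance is fully used
for name, price, pages in FIRECRAWL_PLANS:
    print(f"{name}: ${price / pages * 1000:.2f} per 1,000 pages")  # 14.50, 9.90, 5.98

# Apify free tier: $5 of credits for roughly 1,000-2,000 pages, depending on the actor
print(f"Apify free tier: ${5 / 2_000 * 1000:.2f}-${5 / 1_000 * 1000:.2f} per 1,000 pages")

# Hypothetical volume: 500 sites x 20 pages each
print(cheapest_plan(500 * 20))  # ('Standard', 99, 10000)
```

The 20-pages-per-site figure is invented for illustration; measure real page counts before budgeting.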

Use Case Fit

Choose Firecrawl When:

- Building AI applications that need clean, structured content

- Training LLMs or building RAG systems with web data

- Rapid prototyping where time-to-first-result matters more than customization

- Content monitoring where you need reliable, consistent output formats

- Non-technical teams need to implement web scraping without JavaScript expertise

Choose Apify When:

- Complex, custom scraping logic that requires JavaScript programming

- Existing marketplace actors perfectly match your use case

- E-commerce or price monitoring where specialized scrapers provide better data

- High-volume, cost-sensitive projects where credit optimization matters

- Workflow automation beyond scraping (form filling, social media automation, etc.)

Integration with AI Toolchains

Firecrawl Integration

```python
from firecrawl import FirecrawlApp
from langchain_core.documents import Document
from langchain_text_splitters import RecursiveCharacterTextSplitter

# Scrape with the Firecrawl SDK, then hand the markdown to LangChain
app = FirecrawlApp(api_key="fc-...")
result = app.scrape_url("https://example.com", formats=["markdown"])

# Content is already clean and structured; split_documents expects Document objects
documents = [Document(page_content=result.markdown, metadata=result.metadata or {})]

text_splitter = RecursiveCharacterTextSplitter(chunk_size=1000)
splits = text_splitter.split_documents(documents)
# Ready for vector database ingestion
```
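`RecursiveCharacterTextSplitter` is LangChain's class; the sketch below is a simplified re-implementation of the general idea (try coarse separators such as blank lines first, fall back to finer ones only for pieces that are still too long). It has no chunk overlap and does not reproduce LangChain's exact merging rules.

```python
def recursive_split(text: str, chunk_size: int = 1000,
                    separators: tuple[str, ...] = ("\n\n", "\n", " ", "")) -> list[str]:
    """Split text into chunks of at most chunk_size characters, preferring coarse separators."""
    if len(text) <= chunk_size:
        return [text] if text else []
    if not separators or separators[0] == "":
        # Last resort: hard cut at fixed character offsets
        return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]
    sep, rest = separators[0], separators[1:]
    chunks: list[str] = []
    current = ""
    for piece in text.split(sep):
        candidate = piece if not current else current + sep + piece
        if len(candidate) <= chunk_size:
            current = candidate  # keep merging pieces while they fit
            continue
        if current:
            chunks.append(current)
        if len(piece) > chunk_size:
            # This piece alone is too big: split it with the next, finer separator
            chunks.extend(recursive_split(piece, chunk_size, rest))
            current = ""
        else:
            current = piece
    if current:
        chunks.append(current)
    return chunks


text = "para one.\n\npara two is longer.\n\nthree"
print(recursive_split(text, chunk_size=20))  # ['para one.', 'para two is longer.', 'three']
```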

Apify Integration

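```python
from apify_client import ApifyClient
from langchain_community.document_loaders import ApifyDatasetLoader
from langchain_core.documents import Document

# More complex integration requiring post-processing
client = ApifyClient("apify_api_...")
run = client.actor("apify/website-content-crawler").call(
    run_input={"startUrls": [{"url": "https://example.com"}]}
)

# The mapping function must turn each dataset item into a LangChain Document
loader = ApifyDatasetLoader(
    dataset_id=run["defaultDatasetId"],
    dataset_mapping_function=lambda item: Document(
        page_content=item.get("text") or "", metadata={"source": item.get("url")}
    ),
)
documents = loader.load()

# Requires additional cleaning for LLM consumption (clean_content is your own helper)
cleaned_documents = [clean_content(doc) for doc in documents]
```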
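The Apify snippet calls `clean_content` without defining it. One hypothetical version follows; its heuristics (collapse whitespace, drop very short lines such as menu items, drop duplicate lines) are assumptions, not anything Apify prescribes. It works on any object with a mutable `page_content` string, such as a LangChain `Document`.

```python
from types import SimpleNamespace


def clean_content(doc, min_words: int = 3):
    """Hypothetical cleaner: rewrites doc.page_content in place and returns doc."""
    seen = set()
    kept = []
    for raw in doc.page_content.splitlines():
        line = " ".join(raw.split())  # collapse internal whitespace
        # Skip short lines (e.g. "Home", "Log in") and lines already kept
        if len(line.split()) < min_words or line in seen:
            continue
        seen.add(line)
        kept.append(line)
    doc.page_content = "\n".join(kept)
    return doc


# SimpleNamespace stands in for a Document here so the sketch runs without LangChain
doc = SimpleNamespace(page_content="Home\nLog in\nThe   actual article text starts here.\nShare\nThe actual article text starts here.")
print(clean_content(doc).page_content)  # The actual article text starts here.
```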

The Verdict

For AI workflows specifically, Firecrawl provides significant advantages in content quality, setup simplicity, and integration speed. You'll spend less time on data preprocessing and more time on AI development.

Apify remains the better choice for complex, custom scraping scenarios or when you need specialized marketplace actors. But for straightforward content collection for AI applications, Firecrawl's AI-first approach delivers better results with less effort.

The decision often comes down to team composition: technical teams comfortable with JavaScript and custom configuration may prefer Apify's flexibility. Teams focused on AI development rather than scraping implementation benefit from Firecrawl's simplicity.
